Orion AI Training is a high-performance environment for training large AI models, designed for teams that require speed, stability, and full control over compute resources.

Why Orion AI Training

Training large AI models is costly and resource-intensive. Every hour of slow or constrained training means lost time, wasted energy, and higher spend.

With most global cloud platforms:

- GPU resources are shared

- performance is software-throttled

- access to the latest hardware is region-restricted

Orion AI Factory is built to remove these limitations.

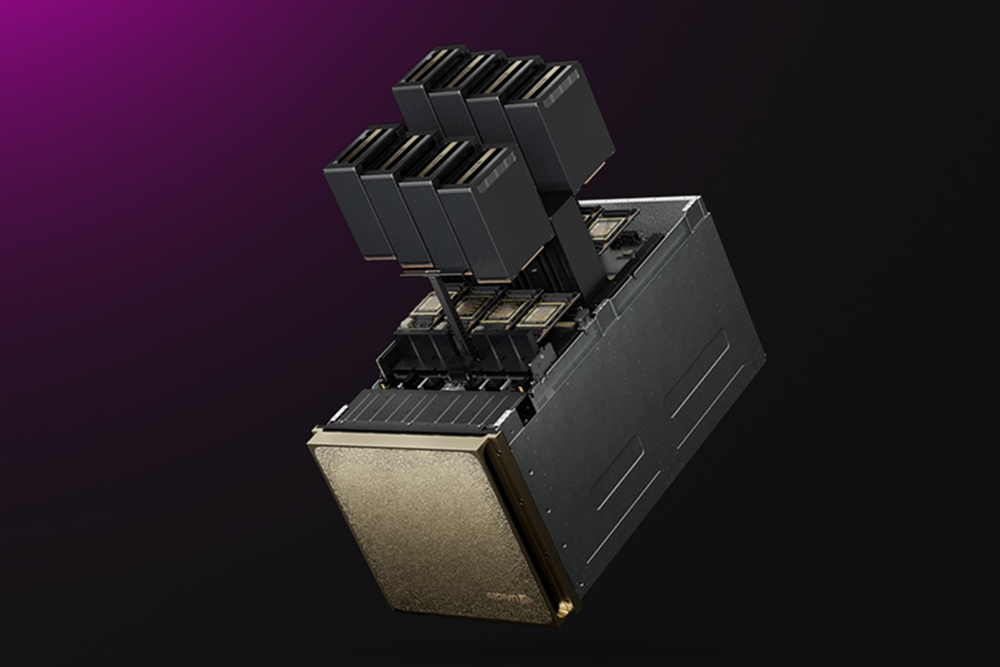

Bare-metal Blackwell performance

Within Orion AI Factory, models are trained on dedicated NVIDIA DGX B200 nodes, with no resource sharing and no throttling.

This delivers:

- full GPU performance per training job

- predictable and stable throughput

- efficient distributed training at scale

On NVIDIA Blackwell B200 GPUs, training workloads can run up to 3x faster than on the previous-generation H100.